Fire navigation research presented at NM Legislature

Firefighters entering a burning building step into a highly dangerous and potentially lethal environment. Smoke and flames in unfamiliar surroundings, compounded by stress and anxiety, make accurate decision-making difficult. How a firefighter handles this combination of factors is a matter of life or death.

In the news (read the stories and see the videos):

- UNM Students Research Presentation at Graduate Education Day at the New Mexico State Capitol
- KOB News: UNM researcher develops life-saving technology for firefighters
- KRQE: UNM student developing technology to save lives of firefighters
- Daily Lobo: Student develops tech that could save firefighters' lives

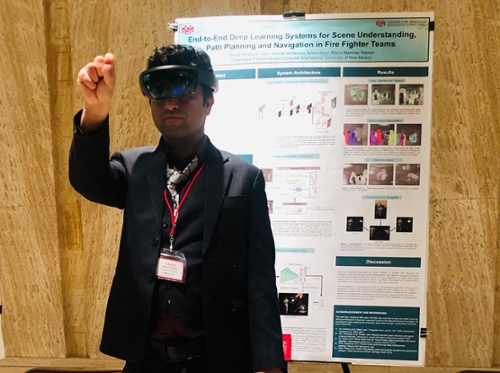

University of New Mexico Electrical and Computer Engineering (ECE) student Manish Bhattarai presented his research to the New Mexico Legislature on February 11. The project, "End-to-End Training of Deep Learning Systems for Scene Understanding, Path Planning and Navigation, 3D Scene Reconstruction," aims to prevent injuries and deaths in fires.

“The audience at the conference, especially the firefighters present, were really impressed with what we are doing. They were particularly interested in the deployment of this technology in fighting wildfires like the recent deadly Camp and Woolsey Fires that claimed 31 lives. This work has also been presented to a firefighter team in the past. They really appreciated the novelty of the proposed system,” Bhattarai said.

Firefighting is a dynamic activity with many operations happening at once, and fires create constantly changing conditions. In such an environment, the system must be able to tell firefighters the best movement options available to them while accounting for these real-time changes, he explained.

Maintaining situational awareness, meaning knowledge of current conditions and activities at the scene, is critical to accurate decision-making, Bhattarai said. Firefighters often carry sensors such as thermal cameras, gas sensors, and microphones as part of their personal equipment. Better data-processing techniques would extract more useful information from these sensors and use it to improve situational awareness during a rescue or firefighting operation. That enhanced awareness would support real-time decision-making and reduce errors in judgment caused by environmental conditions and anxiety.

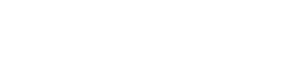

“We employ a deep-learning based system for image classification, object detection and tracking, path planning and navigation, path reconstruction, scene segmentation, estimation of firefighter condition, and Natural Language Processing for informing firefighters about the scene,” Bhattarai said.

As firefighters move through a fire, their sensors would collect and relay information, including thermal imagery, inertial measurement unit (IMU) readings, and a firefighter's stress levels, to a server. The server would run the algorithm, which is being developed and tested on machines at UNM's Center for Advanced Research Computing (CARC), to process the collected information and calculate the best paths for finding victims and exiting the scene.

The server would then relay information back to the firefighters, much like a GPS system, showing the locations of doorways, windows, and, most importantly, people who would otherwise be hidden by smoke and flames. With this information, firefighters could navigate a fire scene, find anyone in the smoke-filled rooms, and make their way back to safety. The system would also show them safe areas to retreat to with victims.
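The article does not say how the path-planning step is implemented, and Bhattarai describes his system as deep-learning based. Purely to illustrate what "calculating the best path" to an exit can mean, here is a minimal sketch that uses a different, classical technique: Dijkstra's shortest-path search over a hypothetical floor grid, where smoke-filled cells cost more to cross and walls or flames cannot be crossed at all. The grid encoding, the cost values, and the `safest_path` function are assumptions made for illustration; none of them come from the UNM system.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]

# Hypothetical cost of stepping into each cell type (assumed values, not from the UNM system):
# "." clear floor, "s" smoke-filled, "#" wall or active fire (impassable).
COSTS: Dict[str, Optional[int]] = {".": 1, "s": 10, "#": None}


def safest_path(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Dijkstra search: return the lowest-cost 4-connected route from start to goal, or None."""
    rows, cols = len(grid), len(grid[0])
    dist: Dict[Cell, float] = {start: 0}
    prev: Dict[Cell, Cell] = {}
    heap: List[Tuple[float, Cell]] = [(0, start)]
    while heap:
        d, cell = heapq.heappop(heap)
        if cell == goal:
            # Walk predecessor links back to the start to rebuild the route.
            path = [cell]
            while path[-1] != start:
                path.append(prev[path[-1]])
            return path[::-1]
        if d > dist.get(cell, float("inf")):
            continue  # stale heap entry; a cheaper route to this cell was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue  # outside the floor plan
            step = COSTS[grid[nr][nc]]
            if step is None:
                continue  # wall or fire: cannot pass
            nd = d + step
            if nd < dist.get((nr, nc), float("inf")):
                dist[(nr, nc)] = nd
                prev[(nr, nc)] = cell
                heapq.heappush(heap, (nd, (nr, nc)))
    return None  # no safe route exists


# Toy floor plan: firefighter at top-left (0, 0), exit at top-right (0, 3).
floor = [
    "..s.",
    ".#s.",
    "....",
]
print(safest_path(floor, (0, 0), (0, 3)))
# → [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2), (2, 3), (1, 3), (0, 3)]
```

On this toy floor plan the cheapest route detours around the smoke-filled cells instead of cutting through them. A system working in a live fire would also need to update the grid as conditions change and replan, which is the kind of real-time adjustment described above.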

“The system makes the decisions. It’s precise. It makes almost no errors,” unlike firefighters who are trying to deal with not only the physical obstacles of the scene but also their own stress levels and anxiety, Bhattarai explained.

So far, Bhattarai's work has been computational: he uses CARC systems to process a dataset of more than 100,000 infrared images recorded with a thermal camera at the Santa Fe firefighting facility. A dataset this large requires a sophisticated deep learning model to accurately classify, detect, and track objects. Bhattarai said he uses CARC resources to run hundreds of models of various fire scenarios, work that would be too time-consuming even on a well-equipped desktop system.

“CARC’s high-performance computing hardware is ideal for this type of analysis,” he said. Bhattarai is entering his second semester as a graduate assistant employed at CARC, where he uses his experience to assist other users with their research.

The project is still in the experimental and development stages, and the fire navigation equipment a firefighter would actually carry has not yet been designed, Bhattarai noted. He expects, however, that firefighters' existing head-mounted gear would be fitted with two to three thermal cameras and a self-contained breathing apparatus (SCBA) microphone. The body gear would include inertial measurement units (IMUs), which measure the wearer's movement, along with a portable processor board whose outputs could be displayed on a tablet screen.

“Once the system is fully tested and all of the kinks are addressed then it will come into production. We are also looking forward to collaborating with a company currently developing systems for firefighters to partner with to improve the feasibility and efficacy of the production of our system,” Bhattarai said, adding, “We are actively working to make this technology accessible to firefighters in the upcoming year.”